Configuring and managing logical volumes

Configuring and managing the LVM on RHEL

Résumé

Rendre l'open source plus inclusif

Red Hat s'engage à remplacer les termes problématiques dans son code, sa documentation et ses propriétés Web. Nous commençons par ces quatre termes : master, slave, blacklist et whitelist. En raison de l'ampleur de cette entreprise, ces changements seront mis en œuvre progressivement au cours de plusieurs versions à venir. Pour plus de détails, voir le message de notre directeur technique Chris Wright.

Fournir un retour d'information sur la documentation de Red Hat

Nous apprécions vos commentaires sur notre documentation. Faites-nous savoir comment nous pouvons l'améliorer.

Soumettre des commentaires sur des passages spécifiques

- Consultez la documentation au format Multi-page HTML et assurez-vous que le bouton Feedback apparaît dans le coin supérieur droit après le chargement complet de la page.

- Utilisez votre curseur pour mettre en évidence la partie du texte que vous souhaitez commenter.

- Cliquez sur le bouton Add Feedback qui apparaît près du texte en surbrillance.

- Ajoutez vos commentaires et cliquez sur Submit.

Soumettre des commentaires via Bugzilla (compte requis)

- Connectez-vous au site Web de Bugzilla.

- Sélectionnez la version correcte dans le menu Version.

- Saisissez un titre descriptif dans le champ Summary.

- Saisissez votre suggestion d'amélioration dans le champ Description. Incluez des liens vers les parties pertinentes de la documentation.

- Cliquez sur Submit Bug.

Chapitre 1. Overview of logical volume management

Logical volume management (LVM) creates a layer of abstraction over physical storage, which helps you to create logical storage volumes. This provides much greater flexibility in a number of ways than using physical storage directly.

In addition, the hardware storage configuration is hidden from the software so it can be resized and moved without stopping applications or unmounting file systems. This can reduce operational costs.

1.1. LVM architecture

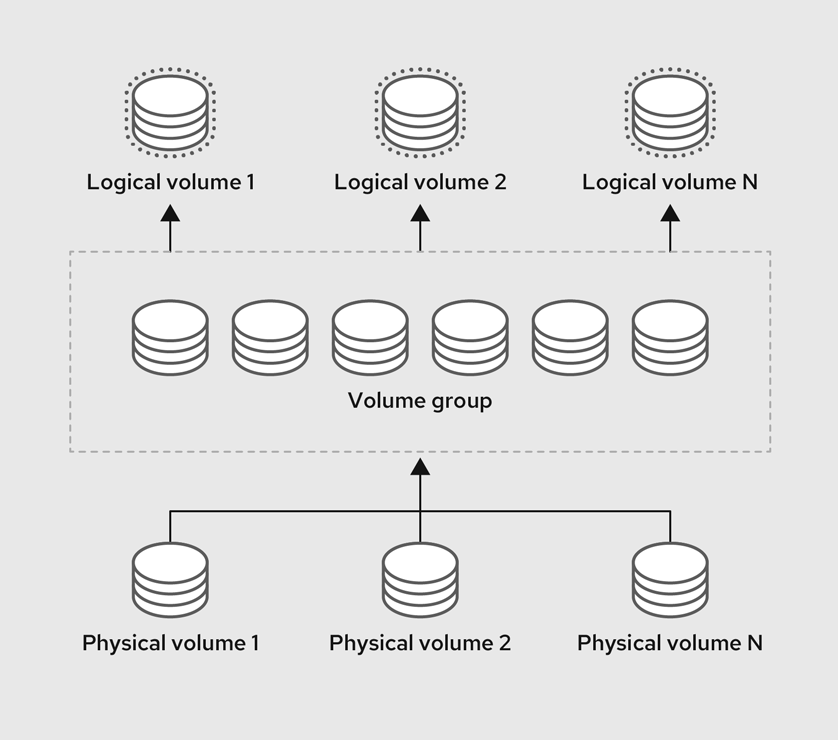

The following are the components of LVM:

- Physical volume

- A physical volume (PV) is a partition or whole disk designated for LVM use. For more information, see Managing LVM physical volumes.

- Volume group

- A volume group (VG) is a collection of physical volumes (PVs), which creates a pool of disk space out of which logical volumes can be allocated. For more information, see Managing LVM volume groups.

- Logical volume

- A logical volume represents a mountable storage device. For more information, see Managing LVM logical volumes.

The following diagram illustrates the components of LVM:

Figure 1.1. LVM logical volume components

1.2. Advantages of LVM

Logical volumes provide the following advantages over using physical storage directly:

- Flexible capacity

- When using logical volumes, you can aggregate devices and partitions into a single logical volume. With this functionality, file systems can extend across multiple devices as though they were a single, large one.

- Resizeable storage volumes

- You can extend logical volumes or reduce logical volumes in size with simple software commands, without reformatting and repartitioning the underlying devices.

- Online data relocation

- To deploy newer, faster, or more resilient storage subsystems, you can move data while your system is active. Data can be rearranged on disks while the disks are in use. For example, you can empty a hot-swappable disk before removing it.

- Convenient device naming

- Logical storage volumes can be managed with user-defined and custom names.

- Striped Volumes

- You can create a logical volume that stripes data across two or more devices. This can dramatically increase throughput.

- RAID volumes

- Logical volumes provide a convenient way to configure RAID for your data. This provides protection against device failure and improves performance.

- Volume snapshots

- You can take snapshots, which is a point-in-time copy of logical volumes for consistent backups or to test the effect of changes without affecting the real data.

- Thin volumes

- Logical volumes can be thinly provisioned. This allows you to create logical volumes that are larger than the available physical space.

- Cache volumes

- A cache logical volume uses a fast block device, such as an SSD drive to improve the performance of a larger and slower block device.

Chapitre 2. Managing LVM physical volumes

The physical volume (PV) is a partition or whole disk designated for LVM use. To use the device for an LVM logical volume, the device must be initialized as a physical volume.

If you are using a whole disk device for your physical volume, the disk must have no partition table. For DOS disk partitions, the partition id should be set to 0x8e using the fdisk or cfdisk command or an equivalent. If you are using a whole disk device for your physical volume, the disk must have no partition table. Any existing partition table must be erased, which will effectively destroy all data on that disk. You can remove an existing partition table using the wipefs -a <PhysicalVolume>` command as root.

2.1. Overview of physical volumes

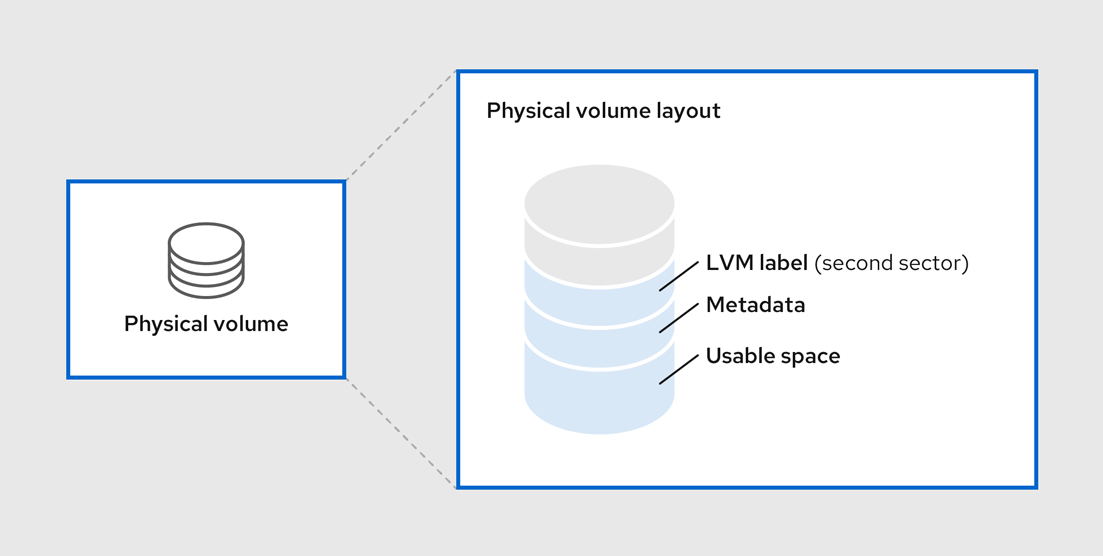

Initializing a block device as a physical volume places a label near the start of the device. The following describes the LVM label:

- An LVM label provides correct identification and device ordering for a physical device. An unlabeled, non-LVM device can change names across reboots depending on the order they are discovered by the system during boot. An LVM label remains persistent across reboots and throughout a cluster.

- The LVM label identifies the device as an LVM physical volume. It contains a random unique identifier, the UUID for the physical volume. It also stores the size of the block device in bytes, and it records where the LVM metadata will be stored on the device.

- By default, the LVM label is placed in the second 512-byte sector. You can overwrite this default setting by placing the label on any of the first 4 sectors when you create the physical volume. This allows LVM volumes to co-exist with other users of these sectors, if necessary.

The following describes the LVM metadata:

- The LVM metadata contains the configuration details of the LVM volume groups on your system. By default, an identical copy of the metadata is maintained in every metadata area in every physical volume within the volume group. LVM metadata is small and stored as ASCII.

- Currently LVM allows you to store 0, 1, or 2 identical copies of its metadata on each physical volume. The default is 1 copy. Once you configure the number of metadata copies on the physical volume, you cannot change that number at a later time. The first copy is stored at the start of the device, shortly after the label. If there is a second copy, it is placed at the end of the device. If you accidentally overwrite the area at the beginning of your disk by writing to a different disk than you intend, a second copy of the metadata at the end of the device will allow you to recover the metadata.

The following diagram illustrates the layout of an LVM physical volume. The LVM label is on the second sector, followed by the metadata area, followed by the usable space on the device.

In the Linux kernel and throughout this document, sectors are considered to be 512 bytes in size.

Figure 2.1. Physical volume layout

Ressources supplémentaires

2.2. Multiple partitions on a disk

You can create physical volumes (PV) out of disk partitions by using LVM.

Red Hat recommends that you create a single partition that covers the whole disk to label as an LVM physical volume for the following reasons:

- Administrative convenience

- It is easier to keep track of the hardware in a system if each real disk only appears once. This becomes particularly true if a disk fails.

- Striping performance

- LVM cannot tell that two physical volumes are on the same physical disk. If you create a striped logical volume when two physical volumes are on the same physical disk, the stripes could be on different partitions on the same disk. This would result in a decrease in performance rather than an increase.

- RAID redundancy

- LVM cannot determine that the two physical volumes are on the same device. If you create a RAID logical volume when two physical volumes are on the same device, performance and fault tolerance could be lost.

Although it is not recommended, there may be specific circumstances when you will need to divide a disk into separate LVM physical volumes. For example, on a system with few disks it may be necessary to move data around partitions when you are migrating an existing system to LVM volumes. Additionally, if you have a very large disk and want to have more than one volume group for administrative purposes then it is necessary to partition the disk. If you do have a disk with more than one partition and both of those partitions are in the same volume group, take care to specify which partitions are to be included in a logical volume when creating volumes.

Note that although LVM supports using a non-partitioned disk as physical volume, it is recommended to create a single, whole-disk partition because creating a PV without a partition can be problematic in a mixed operating system environment. Other operating systems may interpret the device as free, and overwrite the PV label at the beginning of the drive.

2.3. Creating LVM physical volume

This procedure describes how to create and label LVM physical volumes (PVs).

In this procedure, replace the /dev/vdb1, /dev/vdb2, and /dev/vdb3 with the available storage devices in your system.

Conditions préalables

-

The

lvm2package is installed.

Procédure

Create multiple physical volumes by using the space-delimited device names as arguments to the

pvcreatecommand:# pvcreate /dev/vdb1 /dev/vdb2 /dev/vdb3 Physical volume "/dev/vdb1" successfully created. Physical volume "/dev/vdb2" successfully created. Physical volume "/dev/vdb3" successfully created.

This places a label on /dev/vdb1, /dev/vdb2, and /dev/vdb3, marking them as physical volumes belonging to LVM.

View the created physical volumes by using any one of the following commands as per your requirement:

The

pvdisplaycommand, which provides a verbose multi-line output for each physical volume. It displays physical properties, such as size, extents, volume group, and other options in a fixed format:# pvdisplay --- NEW Physical volume --- PV Name /dev/vdb1 VG Name PV Size 1.00 GiB [..] --- NEW Physical volume --- PV Name /dev/vdb2 VG Name PV Size 1.00 GiB [..] --- NEW Physical volume --- PV Name /dev/vdb3 VG Name PV Size 1.00 GiB [..]

The

pvscommand provides physical volume information in a configurable form, displaying one line per physical volume:# pvs PV VG Fmt Attr PSize PFree /dev/vdb1 lvm2 1020.00m 0 /dev/vdb2 lvm2 1020.00m 0 /dev/vdb3 lvm2 1020.00m 0

The

pvscancommand scans all supported LVM block devices in the system for physical volumes. You can define a filter in thelvm.conffile so that this command avoids scanning specific physical volumes:# pvscan PV /dev/vdb1 lvm2 [1.00 GiB] PV /dev/vdb2 lvm2 [1.00 GiB] PV /dev/vdb3 lvm2 [1.00 GiB]

Ressources supplémentaires

-

pvcreate(8),pvdisplay(8),pvs(8),pvscan(8), andlvm(8)man pages

2.4. Removing LVM physical volumes

If a device is no longer required for use by LVM, you can remove the LVM label by using the pvremove command. Executing the pvremove command zeroes the LVM metadata on an empty physical volume.

Procédure

Remove a physical volume:

# pvremove /dev/vdb3 Labels on physical volume "/dev/vdb3" successfully wiped.

View the existing physical volumes and verify if the required volume is removed:

# pvs PV VG Fmt Attr PSize PFree /dev/vdb1 lvm2 1020.00m 0 /dev/vdb2 lvm2 1020.00m 0

If the physical volume you want to remove is currently part of a volume group, you must remove it from the volume group with the vgreduce command. For more information, see Removing physical volumes from a volume group

Ressources supplémentaires

-

pvremove(8)man page

2.5. Ressources supplémentaires

- Creating a partition table on a disk with parted.

-

parted(8)man page.

Chapitre 3. Managing LVM volume groups

A volume group (VG) is a collection of physical volumes (PVs), which creates a pool of disk space out of which logical volumes (LVs) can be allocated.

Within a volume group, the disk space available for allocation is divided into units of a fixed-size called extents. An extent is the smallest unit of space that can be allocated. Within a physical volume, extents are referred to as physical extents.

A logical volume is allocated into logical extents of the same size as the physical extents. The extent size is therefore the same for all logical volumes in the volume group. The volume group maps the logical extents to physical extents.

3.1. Creating LVM volume group

This procedure describes how to create an LVM volume group (VG) myvg, by using the /dev/vdb1 and /dev/vdb2 physical volumes.

Conditions préalables

-

The

lvm2package is installed. - One or more physical volumes are created. For more information about creating physical volumes, see Creating LVM physical volume.

Procédure

Create a volume group:

# vgcreate myvg /dev/vdb1 /dev/vdb2 Volume group "myvg" successfully created.

This creates a VG with the name of myvg. The PVs /dev/vdb1 and /dev/vdb2 are the base storage level for the myvg VG .

View the created volume groups by using any one of the following commands according to your requirement:

The

vgscommand provides volume group information in a configurable form, displaying one line per volume groups:# vgs VG #PV #LV #SN Attr VSize VFree myvg 2 0 0 wz-n 159.99g 159.99gThe

vgdisplaycommand displays volume group properties such as size, extents, number of physical volumes, and other options in a fixed form. The following example shows the output of thevgdisplaycommand for the volume group myvg. To display all existing volume groups, do not specify a volume group:# vgdisplay myvg --- Volume group --- VG Name myvg System ID Format lvm2 Metadata Areas 4 Metadata Sequence No 6 VG Access read/write [..]

The

vgscancommand scans all supported LVM block devices in the system for volume group:# vgscan Found volume group "myvg" using metadata type lvm2

Optional: Increase a volume group’s capacity by adding one or more free physical volumes:

# vgextend myvg /dev/vdb3 Physical volume "/dev/vdb3" successfully created. Volume group "myvg" successfully extended

Optional: Rename an existing volume group:

# vgrename myvg myvg1 Volume group "myvg" successfully renamed to "myvg1"

Ressources supplémentaires

-

vgcreate(8),vgextend(8),vgdisplay(8),vgs(8),vgscan(8),vgrename(8), andlvm(8)man pages

3.2. Combining LVM volume groups

To combine two volume groups into a single volume group, use the vgmerge command. You can merge an inactive "source" volume with an active or an inactive "destination" volume if the physical extent sizes of the volume are equal and the physical and logical volume summaries of both volume groups fit into the destination volume groups limits.

Procédure

Merge the inactive volume group databases into the active or inactive volume group myvg giving verbose runtime information:

# vgmerge -v myvg databases

Ressources supplémentaires

-

vgmerge(8)man page

3.3. Removing physical volumes from a volume group

To remove unused physical volumes from a volume group, use the vgreduce command. The vgreduce command shrinks a volume group’s capacity by removing one or more empty physical volumes. This frees those physical volumes to be used in different volume groups or to be removed from the system.

Procédure

If the physical volume is still being used, migrate the data to another physical volume from the same volume group :

# pvmove /dev/vdb3 /dev/vdb3: Moved: 2.0% ... /dev/vdb3: Moved: 79.2% ... /dev/vdb3: Moved: 100.0%

If there are no enough free extents on the other physical volumes in the existing volume group:

Create a new physical volume from /dev/vdb4:

# pvcreate /dev/vdb4 Physical volume "/dev/vdb4" successfully created

Add the newly created physical volume to the myvg volume group:

# vgextend myvg /dev/vdb4 Volume group "myvg" successfully extended

Move the data from /dev/vdb3 to /dev/vdb4:

# pvmove /dev/vdb3 /dev/vdb4 /dev/vdb3: Moved: 33.33% /dev/vdb3: Moved: 100.00%

Remove the physical volume /dev/vdb3 from the volume group:

# vgreduce myvg /dev/vdb3 Removed "/dev/vdb3" from volume group "myvg"

Vérification

Verify if the /dev/vdb3 physical volume is removed from the myvg volume group:

# pvs PV VG Fmt Attr PSize PFree Used /dev/vdb1 myvg lvm2 a-- 1020.00m 0 1020.00m /dev/vdb2 myvg lvm2 a-- 1020.00m 0 1020.00m /dev/vdb3 lvm2 a-- 1020.00m 1008.00m 12.00m

Ressources supplémentaires

-

vgreduce(8),pvmove(8), andpvs(8)man pages

3.4. Splitting a LVM volume group

If there is enough unused space on the physical volumes, a new volume group can be created without adding new disks.

In the initial setup, the volume group myvg consists of /dev/vdb1, /dev/vdb2, and /dev/vdb3. After completing this procedure, the volume group myvg will consist of /dev/vdb1 and /dev/vdb2, and the second volume group, yourvg, will consist of /dev/vdb3.

Conditions préalables

-

You have sufficient space in the volume group. Use the

vgscancommand to determine how much free space is currently available in the volume group. -

Depending on the free capacity in the existing physical volume, move all the used physical extents to other physical volume using the

pvmovecommand. For more information, see Removing physical volumes from a volume group.

Procédure

Split the existing volume group myvg to the new volume group yourvg:

# vgsplit myvg yourvg /dev/vdb3 Volume group "yourvg" successfully split from "myvg"

NoteIf you have created a logical volume using the existing volume group, use the following command to deactivate the logical volume:

# lvchange -a n /dev/myvg/mylvFor more information on creating logical volumes, see Managing LVM logical volumes.

View the attributes of the two volume group:

# vgs VG #PV #LV #SN Attr VSize VFree myvg 2 1 0 wz--n- 34.30G 10.80G yourvg 1 0 0 wz--n- 17.15G 17.15G

Vérification

Verify if the newly created volume group yourvg consists of /dev/vdb3 physical volume:

# pvs PV VG Fmt Attr PSize PFree Used /dev/vdb1 myvg lvm2 a-- 1020.00m 0 1020.00m /dev/vdb2 myvg lvm2 a-- 1020.00m 0 1020.00m /dev/vdb3 yourvg lvm2 a-- 1020.00m 1008.00m 12.00m

Ressources supplémentaires

-

vgsplit(8),vgs(8), andpvs(8)man pages

Chapitre 4. Managing LVM logical volumes

A logical volume is a virtual, block storage device that a file system, database, or application can use. To create an LVM logical volume, the physical volumes (PVs) are combined into a volume group (VG). This creates a pool of disk space out of which LVM logical volumes (LVs) can be allocated.

4.1. Overview of logical volumes

An administrator can grow or shrink logical volumes without destroying data, unlike standard disk partitions. If the physical volumes in a volume group are on separate drives or RAID arrays, then administrators can also spread a logical volume across the storage devices.

You can lose data if you shrink a logical volume to a smaller capacity than the data on the volume requires. Further, some file systems are not capable of shrinking. To ensure maximum flexibility, create logical volumes to meet your current needs, and leave excess storage capacity unallocated. You can safely extend logical volumes to use unallocated space, depending on your needs.

On AMD, Intel, ARM systems, and IBM Power Systems servers, the boot loader cannot read LVM volumes. You must make a standard, non-LVM disk partition for your /boot partition. On IBM Z, the zipl boot loader supports /boot on LVM logical volumes with linear mapping. By default, the installation process always creates the / and swap partitions within LVM volumes, with a separate /boot partition on a physical volume.

The following are the different types of logical volumes:

- Linear volumes

- A linear volume aggregates space from one or more physical volumes into one logical volume. For example, if you have two 60GB disks, you can create a 120GB logical volume. The physical storage is concatenated.

- Striped logical volumes

When you write data to an LVM logical volume, the file system lays the data out across the underlying physical volumes. You can control the way the data is written to the physical volumes by creating a striped logical volume. For large sequential reads and writes, this can improve the efficiency of the data I/O.

Striping enhances performance by writing data to a predetermined number of physical volumes in round-robin fashion. With striping, I/O can be done in parallel. In some situations, this can result in near-linear performance gain for each additional physical volume in the stripe.

- RAID logical volumes

- LVM supports RAID levels 0, 1, 4, 5, 6, and 10. RAID logical volumes are not cluster-aware. When you create a RAID logical volume, LVM creates a metadata subvolume that is one extent in size for every data or parity subvolume in the array.

- Thin-provisioned logical volumes (thin volumes)

- Using thin-provisioned logical volumes, you can create logical volumes that are larger than the available physical storage. Creating a thinly provisioned set of volumes allows the system to allocate what you use instead of allocating the full amount of storage that is requested

- Snapshot volumes

- The LVM snapshot feature provides the ability to create virtual images of a device at a particular instant without causing a service interruption. When a change is made to the original device (the origin) after a snapshot is taken, the snapshot feature makes a copy of the changed data area as it was prior to the change so that it can reconstruct the state of the device.

- Thin-provisioned snapshot volumes

- Using thin-provisioned snapshot volumes, you can have more virtual devices to be stored on the same data volume. Thinly provisioned snapshots are useful because you are not copying all of the data that you are looking to capture at a given time.

- Cache volumes

- LVM supports the use of fast block devices, such as SSD drives as write-back or write-through caches for larger slower block devices. Users can create cache logical volumes to improve the performance of their existing logical volumes or create new cache logical volumes composed of a small and fast device coupled with a large and slow device.

4.2. Creating LVM logical volume

This procedure describes how to create mylv LVM logical volume (LV) from the myvg volume group, which is created by using the /dev/vdb1, /dev/vdb2, and /dev/vdb3 physical volumes.

Conditions préalables

-

The

lvm2package is installed. - The volume group is created. For more information, see Creating LVM volume group.

Procédure

Create a logical volume:

# lvcreate -n mylv -L 500M myvg

Use the

-noption to set the LV name to mylv, and the-Loption to set the size of LV in units of Mb, but it is possible to use any other units. The LV type is linear by default, but the user can specify the desired type by using the--typeoption.ImportantThe command fails if the VG does not have a sufficient number of free physical extents for the requested size and type.

View the created logical volumes by using any one of the following commands as per your requirement:

The

lvscommand provides logical volume information in a configurable form, displaying one line per logical volume:# lvs LV VG Attr LSize Pool Origin Data% Meta% Move Log Cpy%Sync Convert mylv myvg -wi-ao---- 500.00mThe

lvdisplaycommand displays logical volume properties, such as size, layout, and mapping in a fixed format:# lvdisplay -v /dev/myvg/mylv --- Logical volume --- LV Path /dev/myvg/mylv LV Name mylv VG Name myvg LV UUID YTnAk6-kMlT-c4pG-HBFZ-Bx7t-ePMk-7YjhaM LV Write Access read/write [..]

The

lvscancommand scans for all logical volumes in the system and lists them:# lvscan ACTIVE '/dev/myvg/mylv' [500.00 MiB] inherit

Create a file system on the logical volume. The following command creates an

xfsfile system on the logical volume:# mkfs.xfs /dev/myvg/mylv meta-data=/dev/myvg/mylv isize=512 agcount=4, agsize=32000 blks = sectsz=512 attr=2, projid32bit=1 = crc=1 finobt=1, sparse=1, rmapbt=0 = reflink=1 data = bsize=4096 blocks=128000, imaxpct=25 = sunit=0 swidth=0 blks naming =version 2 bsize=4096 ascii-ci=0, ftype=1 log =internal log bsize=4096 blocks=1368, version=2 = sectsz=512 sunit=0 blks, lazy-count=1 realtime =none extsz=4096 blocks=0, rtextents=0 Discarding blocks...Done.

Mount the logical volume and report the file system disk space usage:

# mount /dev/myvg/mylv /mnt # df -h Filesystem 1K-blocks Used Available Use% Mounted on /dev/mapper/myvg-mylv 506528 29388 477140 6% /mnt

Ressources supplémentaires

-

lvcreate(8),lvdisplay(8),lvs(8),lvscan(8),lvm(8)andmkfs.xfs(8)man pages

4.3. Creating a RAID0 striped logical volume

A RAID0 logical volume spreads logical volume data across multiple data subvolumes in units of stripe size. The following procedure creates an LVM RAID0 logical volume called mylv that stripes data across the disks.

Conditions préalables

- You have created three or more physical volumes. For more information on creating physical volumes, see Creating LVM physical volume.

- You have created the volume group. For more information, see Creating LVM volume group.

Procédure

Create a RAID0 logical volume from the existing volume group. The following command creates the RAID0 volume mylv from the volume group myvg, which is 2G in size, with three stripes and a stripe size of 4kB:

# lvcreate --type raid0 -L 2G --stripes 3 --stripesize 4 -n mylv my_vg Rounding size 2.00 GiB (512 extents) up to stripe boundary size 2.00 GiB(513 extents). Logical volume "mylv" created.

Create a file system on the RAID0 logical volume. The following command creates an ext4 file system on the logical volume:

# mkfs.ext4 /dev/my_vg/mylvMount the logical volume and report the file system disk space usage:

# mount /dev/my_vg/mylv /mnt # df Filesystem 1K-blocks Used Available Use% Mounted on /dev/mapper/my_vg-mylv 2002684 6168 1875072 1% /mnt

Vérification

View the created RAID0 stripped logical volume:

# lvs -a -o +devices,segtype my_vg LV VG Attr LSize Pool Origin Data% Meta% Move Log Cpy%Sync Convert Devices Type mylv my_vg rwi-a-r--- 2.00g mylv_rimage_0(0),mylv_rimage_1(0),mylv_rimage_2(0) raid0 [mylv_rimage_0] my_vg iwi-aor--- 684.00m /dev/sdf1(0) linear [mylv_rimage_1] my_vg iwi-aor--- 684.00m /dev/sdg1(0) linear [mylv_rimage_2] my_vg iwi-aor--- 684.00m /dev/sdh1(0) linear

4.4. Renaming LVM logical volumes

This procedure describes how to rename an existing logical volume mylv to mylv1.

Procédure

If the logical volume is currently mounted, unmount the volume:

# umount /mntReplace /mnt with the mount point.

Rename an existing logical volume:

# lvrename myvg mylv mylv1 Renamed "mylv" to "mylv1" in volume group "myvg"

You can also rename the logical volume by specifying the full paths to the devices:

# lvrename /dev/myvg/mylv /dev/myvg/mylv1

Ressources supplémentaires

-

lvrename(8)man page

4.5. Removing a disk from a logical volume

This procedure describes how to remove a disk from an existing logical volume, either to replace the disk or to use the disk as part of a different volume.

In order to remove a disk, you must first move the extents on the LVM physical volume to a different disk or set of disks.

Procédure

View the used and free space of physical volumes when using the LV:

# pvs -o+pv_used PV VG Fmt Attr PSize PFree Used /dev/vdb1 myvg lvm2 a-- 1020.00m 0 1020.00m /dev/vdb2 myvg lvm2 a-- 1020.00m 0 1020.00m /dev/vdb3 myvg lvm2 a-- 1020.00m 1008.00m 12.00m

Move the data to other physical volume:

If there are enough free extents on the other physical volumes in the existing volume group, use the following command to move the data:

# pvmove /dev/vdb3 /dev/vdb3: Moved: 2.0% ... /dev/vdb3: Moved: 79.2% ... /dev/vdb3: Moved: 100.0%

If there are no enough free extents on the other physical volumes in the existing volume group, use the following commands to add a new physical volume, extend the volume group using the newly created physical volume, and move the data to this physical volume:

# pvcreate /dev/vdb4 Physical volume "/dev/vdb4" successfully created # vgextend myvg /dev/vdb4 Volume group "myvg" successfully extended # pvmove /dev/vdb3 /dev/vdb4 /dev/vdb3: Moved: 33.33% /dev/vdb3: Moved: 100.00%

Remove the physical volume:

# vgreduce myvg /dev/vdb3 Removed "/dev/vdb3" from volume group "myvg"

If a logical volume contains a physical volume that fails, you cannot use that logical volume. To remove missing physical volumes from a volume group, you can use the

--removemissingparameter of thevgreducecommand, if there are no logical volumes that are allocated on the missing physical volumes:# vgreduce --removemissing myvg

Ressources supplémentaires

-

pvmove(8),vgextend(8),vereduce(8), andpvs(8)man pages

4.6. Removing LVM logical volumes

This procedure describes how to remove an existing logical volume /dev/myvg/mylv1 from the volume group myvg.

Procédure

If the logical volume is currently mounted, unmount the volume:

# umount /mntIf the logical volume exists in a clustered environment, deactivate the logical volume on all nodes where it is active. Use the following command on each such node:

# lvchange --activate n vg-name/lv-nameRemove the logical volume using the

lvremoveutility:# lvremove /dev/myvg/mylv1 Do you really want to remove active logical volume "mylv1"? [y/n]: y Logical volume "mylv1" successfully removed

NoteIn this case, the logical volume has not been deactivated. If you explicitly deactivated the logical volume before removing it, you would not see the prompt verifying whether you want to remove an active logical volume.

Ressources supplémentaires

-

lvremove(8)man page

4.7. Managing LVM logical volumes using RHEL System Roles

Use the storage role to perform the following tasks:

- Create an LVM logical volume in a volume group consisting of multiple disks.

- Create an ext4 file system with a given label on the logical volume.

- Persistently mount the ext4 file system.

Conditions préalables

-

An Ansible playbook including the

storagerole

4.7.1. Exemple de script Ansible pour la gestion des volumes logiques

Cette section fournit un exemple de manuel de jeu Ansible. Ce playbook applique le rôle storage pour créer un volume logique LVM dans un groupe de volumes.

Exemple 4.1. Un playbook qui crée un volume logique mylv dans le groupe de volumes myvg

- hosts: all

vars:

storage_pools:

- name: myvg

disks:

- sda

- sdb

- sdc

volumes:

- name: mylv

size: 2G

fs_type: ext4

mount_point: /mnt/data

roles:

- rhel-system-roles.storageLe groupe de volumes

myvgse compose des disques suivants :-

/dev/sda -

/dev/sdb -

/dev/sdc

-

-

Si le groupe de volumes

myvgexiste déjà, la procédure ajoute le volume logique au groupe de volumes. -

Si le groupe de volumes

myvgn'existe pas, le playbook le crée. -

La procédure crée un système de fichiers Ext4 sur le volume logique

mylvet monte de manière persistante le système de fichiers à l'adresse/mnt.

Ressources supplémentaires

-

Le fichier

/usr/share/ansible/roles/rhel-system-roles.storage/README.md.

4.7.2. Ressources supplémentaires

-

For more information about the

storagerole, see Managing local storage using RHEL System Roles.

4.8. Removing LVM volume groups

This procedure describes how to remove an existing volume group.

Conditions préalables

- The volume group contains no logical volumes. To remove logical volumes from a volume group, see Removing LVM logical volumes.

Procédure

If the volume group exists in a clustered environment, stop the

lockspaceof the volume group on all other nodes. Use the following command on all nodes except the node where you are performing the removing:# vgchange --lockstop vg-nameWait for the lock to stop.

Remove the volume group:

# vgremove vg-name Volume group "vg-name" successfully removed

Ressources supplémentaires

-

vgremove(8)man page

Chapitre 5. Modifying the size of a logical volume

After you have created a logical volume, you can modify the size of the volume.

5.1. Growing a logical volume and file system

This procedure describes how to extend the logical volume and grow a file system on the same logical volume.

To increase the size of a logical volume, use the lvextend command. When you extend the logical volume, you can indicate how much you want to extend the volume, or how large you want it to be after you extend it.

Conditions préalables

You have an existing logical volume (LV) with a file system on it. Determine the file system type by using the

df -Thcommand.For more information on creating LV and a file system, see Creating LVM logical volume.

-

You have sufficient space in the volume group to grow your LV and file system. Use the

vgs -o name,vgfreecommand to determine the available space.

Procédure

Optional: If the volume group has insufficient space to grow your LV, then add a new physical volume to the volume group by using the following command:

# vgextend myvg /dev/vdb3 Physical volume "/dev/vdb3" successfully created. Volume group "myvg" successfully extended

For more information, see Creating LVM volume group.

Now that the volume group is large enough, execute any one of the following steps as per your requirement:

To extend the LV with the provided size, use the following command:

# lvextend -L 3G /dev/myvg/mylv Size of logical volume myvg/mylv changed from 2.00 GiB (512 extents) to 3.00 GiB (768 extents). Logical volume myvg/mylv successfully resized.

NoteYou can use the

-roption of thelvextendcommand to extend the logical volume and resize the underlying file system with a single command:# lvextend -r -L 3G /dev/myvg/mylvAvertissementYou can also extend the logical volume using the

lvresizecommand with the same arguments, but this command does not guarantee against accidental shrinkage.To extend the mylv logical volume to fill all of the unallocated space in the myvg volume group, use the following command:

# lvextend -l +100%FREE /dev/myvg/mylv Size of logical volume myvg/mylv changed from 10.00 GiB (2560 extents) to 6.35 TiB (1665465 extents). Logical volume myvg/mylv successfully resized.

As with the

lvcreatecommand, you can use the-largument of thelvextendcommand to specify the number of extents by which to increase the size of the logical volume. You can also use this argument to specify a percentage of the volume group, or a percentage of the remaining free space in the volume group.

If you are not using the

roption with thelvextendcommand to extend the LV and resize the file system with a single command, then resize the file system on the logical volume by using the following command:xfs_growfs /mnt/mnt1/ meta-data=/dev/mapper/myvg-mylv isize=512 agcount=4, agsize=65536 blks = sectsz=512 attr=2, projid32bit=1 = crc=1 finobt=1, sparse=1, rmapbt=0 = reflink=1 data = bsize=4096 blocks=262144, imaxpct=25 = sunit=0 swidth=0 blks naming =version 2 bsize=4096 ascii-ci=0, ftype=1 log =internal log bsize=4096 blocks=2560, version=2 = sectsz=512 sunit=0 blks, lazy-count=1 realtime =none extsz=4096 blocks=0, rtextents=0 data blocks changed from 262144 to 524288

NoteWithout the

-Doption,xfs_growfsgrows the file system to the maximum size supported by the underlying device. For more information, see Increasing the size of an XFS file system.For resizing an ext4 file system, see Resizing an ext4 file system.

Vérification

Verify if the file system is growing by using the following command:

# df -Th Filesystem Type Size Used Avail Use% Mounted on devtmpfs devtmpfs 1.9G 0 1.9G 0% /dev tmpfs tmpfs 1.9G 0 1.9G 0% /dev/shm tmpfs tmpfs 1.9G 8.6M 1.9G 1% /run tmpfs tmpfs 1.9G 0 1.9G 0% /sys/fs/cgroup /dev/mapper/rhel-root xfs 45G 3.7G 42G 9% / /dev/vda1 xfs 1014M 369M 646M 37% /boot tmpfs tmpfs 374M 0 374M 0% /run/user/0 /dev/mapper/myvg-mylv xfs 2.0G 47M 2.0G 3% /mnt/mnt1

Ressources supplémentaires

-

vgextend(8),lvextend(8), andxfs_growfs(8)man pages

5.2. Shrinking logical volumes

You can reduce the size of a logical volume with the lvreduce command.

Shrinking is not supported on a GFS2 or XFS file system, so you cannot reduce the size of a logical volume that contains a GFS2 or XFS file system.

If the logical volume you are reducing contains a file system, to prevent data loss you must ensure that the file system is not using the space in the logical volume that is being reduced. For this reason, it is recommended that you use the --resizefs option of the lvreduce command when the logical volume contains a file system.

When you use this option, the lvreduce command attempts to reduce the file system before shrinking the logical volume. If shrinking the file system fails, as can occur if the file system is full or the file system does not support shrinking, then the lvreduce command will fail and not attempt to shrink the logical volume.

In most cases, the lvreduce command warns about possible data loss and asks for a confirmation. However, you should not rely on these confirmation prompts to prevent data loss because in some cases you will not see these prompts, such as when the logical volume is inactive or the --resizefs option is not used.

Note that using the --test option of the lvreduce command does not indicate where the operation is safe, as this option does not check the file system or test the file system resize.

Procédure

To shrink the mylv logical volume in myvg volume group to 64 megabytes, use the following command:

# lvreduce --resizefs -L 64M myvg/mylv fsck from util-linux 2.37.2 /dev/mapper/myvg-mylv: clean, 11/25688 files, 4800/102400 blocks resize2fs 1.46.2 (28-Feb-2021) Resizing the filesystem on /dev/mapper/myvg-mylv to 65536 (1k) blocks. The filesystem on /dev/mapper/myvg-mylv is now 65536 (1k) blocks long. Size of logical volume myvg/mylv changed from 100.00 MiB (25 extents) to 64.00 MiB (16 extents). Logical volume myvg/mylv successfully resized.

In this example, mylv contains a file system, which this command resizes together with the logical volume.

Specifying the

-sign before the resize value indicates that the value will be subtracted from the logical volume’s actual size. To shrink a logical volume to an absolute size of 64 megabytes, use the following command:# lvreduce --resizefs -L -64M myvg/mylv

Ressources supplémentaires

-

lvreduce(8)man page

Chapitre 6. Configuring RAID logical volumes

You can create, activate, change, remove, display, and use LVM Redundant Array of Independent Disks (RAID) volumes.

6.1. RAID logical volumes

Logical volume manager (LVM) supports Redundant Array of Independent Disks (RAID) levels 0, 1, 4, 5, 6, and 10. An LVM RAID volume has the following characteristics:

- LVM creates and manages RAID logical volumes that leverage the Multiple Devices (MD) kernel drivers.

- You can temporarily split RAID1 images from the array and merge them back into the array later.

- LVM RAID volumes support snapshots.

Other characteristics include:

- Clusters

RAID logical volumes are not cluster-aware.

Although you can create and activate RAID logical volumes exclusively on one machine, you cannot activate them simultaneously on more than one machine.

- Subvolumes

When you create a RAID logical volume (LV), LVM creates a metadata subvolume that is one extent in size for every data or parity subvolume in the array.

For example, creating a 2-way RAID1 array results in two metadata subvolumes (

lv_rmeta_0andlv_rmeta_1) and two data subvolumes (lv_rimage_0andlv_rimage_1). Similarly, creating a 3-way stripe and one implicit parity device, RAID4 results in four metadata subvolumes (lv_rmeta_0,lv_rmeta_1,lv_rmeta_2, andlv_rmeta_3) and four data subvolumes (lv_rimage_0,lv_rimage_1,lv_rimage_2, andlv_rimage_3).- Integrity

- You can lose data when a RAID device fails or when soft corruption occurs. Soft corruption in data storage implies that the data retrieved from a storage device is different from the data written to that device. Adding integrity to a RAID LV reduces or prevent soft corruption. For more information, see Creating a RAID LV with DM integrity.

6.2. RAID levels and linear support

The following are the supported configurations by RAID, including levels 0, 1, 4, 5, 6, 10, and linear:

- Niveau 0

RAID level 0, often called striping, is a performance-oriented striped data mapping technique. This means the data being written to the array is broken down into stripes and written across the member disks of the array, allowing high I/O performance at low inherent cost but provides no redundancy.

RAID level 0 implementations only stripe the data across the member devices up to the size of the smallest device in the array. This means that if you have multiple devices with slightly different sizes, each device gets treated as though it was the same size as the smallest drive. Therefore, the common storage capacity of a level 0 array is the total capacity of all disks. If the member disks have a different size, then the RAID0 uses all the space of those disks using the available zones.

- Niveau 1

RAID level 1, or mirroring, provides redundancy by writing identical data to each member disk of the array, leaving a mirrored copy on each disk. Mirroring remains popular due to its simplicity and high level of data availability. Level 1 operates with two or more disks, and provides very good data reliability and improves performance for read-intensive applications but at relatively high costs.

RAID level 1 is costly because you write the same information to all of the disks in the array, which provides data reliability, but in a much less space-efficient manner than parity based RAID levels such as level 5. However, this space inefficiency comes with a performance benefit, which is parity-based RAID levels that consume considerably more CPU power in order to generate the parity while RAID level 1 simply writes the same data more than once to the multiple RAID members with very little CPU overhead. As such, RAID level 1 can outperform the parity-based RAID levels on machines where software RAID is employed and CPU resources on the machine are consistently taxed with operations other than RAID activities.

The storage capacity of the level 1 array is equal to the capacity of the smallest mirrored hard disk in a hardware RAID or the smallest mirrored partition in a software RAID. Level 1 redundancy is the highest possible among all RAID types, with the array being able to operate with only a single disk present.

- Niveau 4

Level 4 uses parity concentrated on a single disk drive to protect data. Parity information is calculated based on the content of the rest of the member disks in the array. This information can then be used to reconstruct data when one disk in the array fails. The reconstructed data can then be used to satisfy I/O requests to the failed disk before it is replaced and to repopulate the failed disk after it has been replaced.

Since the dedicated parity disk represents an inherent bottleneck on all write transactions to the RAID array, level 4 is seldom used without accompanying technologies such as write-back caching. Or it is used in specific circumstances where the system administrator is intentionally designing the software RAID device with this bottleneck in mind such as an array that has little to no write transactions once the array is populated with data. RAID level 4 is so rarely used that it is not available as an option in Anaconda. However, it could be created manually by the user if needed.

The storage capacity of hardware RAID level 4 is equal to the capacity of the smallest member partition multiplied by the number of partitions minus one. The performance of a RAID level 4 array is always asymmetrical, which means reads outperform writes. This is because write operations consume extra CPU resources and main memory bandwidth when generating parity, and then also consume extra bus bandwidth when writing the actual data to disks because you are not only writing the data, but also the parity. Read operations need only read the data and not the parity unless the array is in a degraded state. As a result, read operations generate less traffic to the drives and across the buses of the computer for the same amount of data transfer under normal operating conditions.

- Niveau 5

This is the most common type of RAID. By distributing parity across all the member disk drives of an array, RAID level 5 eliminates the write bottleneck inherent in level 4. The only performance bottleneck is the parity calculation process itself. Modern CPUs can calculate parity very fast. However, if you have a large number of disks in a RAID 5 array such that the combined aggregate data transfer speed across all devices is high enough, parity calculation can be a bottleneck.

Level 5 has asymmetrical performance, and reads substantially outperforming writes. The storage capacity of RAID level 5 is calculated the same way as with level 4.

- Level 6

This is a common level of RAID when data redundancy and preservation, and not performance, are the paramount concerns, but where the space inefficiency of level 1 is not acceptable. Level 6 uses a complex parity scheme to be able to recover from the loss of any two drives in the array. This complex parity scheme creates a significantly higher CPU burden on software RAID devices and also imposes an increased burden during write transactions. As such, level 6 is considerably more asymmetrical in performance than levels 4 and 5.

The total capacity of a RAID level 6 array is calculated similarly to RAID level 5 and 4, except that you must subtract two devices instead of one from the device count for the extra parity storage space.

- Level 10

This RAID level attempts to combine the performance advantages of level 0 with the redundancy of level 1. It also reduces some of the space wasted in level 1 arrays with more than two devices. With level 10, it is possible, for example, to create a 3-drive array configured to store only two copies of each piece of data, which then allows the overall array size to be 1.5 times the size of the smallest devices instead of only equal to the smallest device, similar to a 3-device, level 1 array. This avoids CPU process usage to calculate parity similar to RAID level 6, but it is less space efficient.

The creation of RAID level 10 is not supported during installation. It is possible to create one manually after installation.

- Linear RAID

Linear RAID is a grouping of drives to create a larger virtual drive.

In linear RAID, the chunks are allocated sequentially from one member drive, going to the next drive only when the first is completely filled. This grouping provides no performance benefit, as it is unlikely that any I/O operations split between member drives. Linear RAID also offers no redundancy and decreases reliability. If any one member drive fails, the entire array cannot be used and data can be lost. The capacity is the total of all member disks.

6.3. LVM RAID segment types

To create a RAID logical volume, you can specify a RAID type by using the --type argument of the lvcreate command. For most users, specifying one of the five available primary types, which are raid1, raid4, raid5, raid6, and raid10, should be sufficient.

The following table describes the possible RAID segment types.

Tableau 6.1. LVM RAID segment types

| Segment type | Description |

|---|---|

|

|

RAID1 mirroring. This is the default value for the |

|

| RAID4 dedicated parity disk. |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| Striping. RAID0 spreads logical volume data across multiple data subvolumes in units of stripe size. This is used to increase performance. Logical volume data is lost if any of the data subvolumes fail. |

6.4. Creating RAID logical volumes

You can create RAID1 arrays with multiple numbers of copies, according to the value you specify for the -m argument. Similarly, you can specify the number of stripes for a RAID 0, 4, 5, 6, and 10 logical volume with the -i argument. You can also specify the stripe size with the -I argument. The following procedure describes different ways to create different types of RAID logical volume.

Procédure

Create a 2-way RAID. The following command creates a 2-way RAID1 array, named my_lv, in the volume group my_vg, that is 1G in size:

# lvcreate --type raid1 -m 1 -L 1G -n my_lv my_vg Logical volume "my_lv" created.

Create a RAID5 array with stripes. The following command creates a RAID5 array with three stripes and one implicit parity drive, named my_lv, in the volume group my_vg, that is 1G in size. Note that you can specify the number of stripes similar to an LVM striped volume. The correct number of parity drives is added automatically.

# lvcreate --type raid5 -i 3 -L 1G -n my_lv my_vg

Create a RAID6 array with stripes. The following command creates a RAID6 array with three 3 stripes and two implicit parity drives, named my_lv, in the volume group my_vg, that is 1G one gigabyte in size:

# lvcreate --type raid6 -i 3 -L 1G -n my_lv my_vg

Vérification

- Display the LVM device my_vg/my_lv, which is a 2-way RAID1 array:

# lvs -a -o name,copy_percent,devices _my_vg_ LV Copy% Devices my_lv 6.25 my_lv_rimage_0(0),my_lv_rimage_1(0) [my_lv_rimage_0] /dev/sde1(0) [my_lv_rimage_1] /dev/sdf1(1) [my_lv_rmeta_0] /dev/sde1(256) [my_lv_rmeta_1] /dev/sdf1(0)

Ressources supplémentaires

-

lvcreate(8)andlvmraid(7)man pages

6.5. Creating a RAID0 striped logical volume

A RAID0 logical volume spreads logical volume data across multiple data subvolumes in units of stripe size. The following procedure creates an LVM RAID0 logical volume called mylv that stripes data across the disks.

Conditions préalables

- You have created three or more physical volumes. For more information on creating physical volumes, see Creating LVM physical volume.

- You have created the volume group. For more information, see Creating LVM volume group.

Procédure

Create a RAID0 logical volume from the existing volume group. The following command creates the RAID0 volume mylv from the volume group myvg, which is 2G in size, with three stripes and a stripe size of 4kB:

# lvcreate --type raid0 -L 2G --stripes 3 --stripesize 4 -n mylv my_vg Rounding size 2.00 GiB (512 extents) up to stripe boundary size 2.00 GiB(513 extents). Logical volume "mylv" created.

Create a file system on the RAID0 logical volume. The following command creates an ext4 file system on the logical volume:

# mkfs.ext4 /dev/my_vg/mylvMount the logical volume and report the file system disk space usage:

# mount /dev/my_vg/mylv /mnt # df Filesystem 1K-blocks Used Available Use% Mounted on /dev/mapper/my_vg-mylv 2002684 6168 1875072 1% /mnt

Vérification

View the created RAID0 stripped logical volume:

# lvs -a -o +devices,segtype my_vg LV VG Attr LSize Pool Origin Data% Meta% Move Log Cpy%Sync Convert Devices Type mylv my_vg rwi-a-r--- 2.00g mylv_rimage_0(0),mylv_rimage_1(0),mylv_rimage_2(0) raid0 [mylv_rimage_0] my_vg iwi-aor--- 684.00m /dev/sdf1(0) linear [mylv_rimage_1] my_vg iwi-aor--- 684.00m /dev/sdg1(0) linear [mylv_rimage_2] my_vg iwi-aor--- 684.00m /dev/sdh1(0) linear

6.6. Parameters for creating a RAID0

You can create a RAID0 striped logical volume using the lvcreate --type raid0[meta] --stripes _Stripes --stripesize StripeSize VolumeGroup [PhysicalVolumePath] command.

The following table describes different parameters, which you can use while creating a RAID0 striped logical volume.

Tableau 6.2. Parameters for creating a RAID0 striped logical volume

| Paramètres | Description |

|---|---|

|

|

Specifying |

|

| Specifies the number of devices to spread the logical volume across. |

|

| Specifies the size of each stripe in kilobytes. This is the amount of data that is written to one device before moving to the next device. |

|

| Specifies the volume group to use. |

|

| Specifies the devices to use. If this is not specified, LVM will choose the number of devices specified by the Stripes option, one for each stripe. |

6.7. Soft data corruption

Soft corruption in data storage implies that the data retrieved from a storage device is different from the data written to that device. The corrupted data can exist indefinitely on storage devices. You might not discover this corrupted data until you retrieve and attempt to use this data.

Depending on the type of configuration, a Redundant Array of Independent Disks (RAID) logical volume(LV) prevents data loss when a device fails. If a device consisting of a RAID array fails, the data can be recovered from other devices that are part of that RAID LV. However, a RAID configuration does not ensure the integrity of the data itself. Soft corruption, silent corruption, soft errors, and silent errors are terms that describe data that has become corrupted, even if the system design and software continues to function as expected.

Device mapper (DM) integrity is used with RAID levels 1, 4, 5, 6, and 10 to mitigate or prevent data loss due to soft corruption. The RAID layer ensures that a non-corrupted copy of the data can fix the soft corruption errors. The integrity layer sits above each RAID image while an extra sub LV stores the integrity metadata or data checksums for each RAID image. When you retrieve data from an RAID LV with integrity, the integrity data checksums analyze the data for corruption. If corruption is detected, the integrity layer returns an error message, and the RAID layer retrieves a non-corrupted copy of the data from another RAID image. The RAID layer automatically rewrites non-corrupted data over the corrupted data to repair the soft corruption.

When creating a new RAID LV with DM integrity or adding integrity to an existing RAID LV, consider the following points:

- The integrity metadata requires additional storage space. For each RAID image, every 500MB data requires 4MB of additional storage space because of the checksums that get added to the data.

- While some RAID configurations are impacted more than others, adding DM integrity impacts performance due to latency when accessing the data. A RAID1 configuration typically offers better performance than RAID5 or its variants.

- The RAID integrity block size also impacts performance. Configuring a larger RAID integrity block size offers better performance. However, a smaller RAID integrity block size offers greater backward compatibility.

-

There are two integrity modes available:

bitmaporjournal. Thebitmapintegrity mode typically offers better performance thanjournalmode.

If you experience performance issues, either use RAID1 with integrity or test the performance of a particular RAID configuration to ensure that it meets your requirements.

6.8. Creating a RAID LV with DM integrity

When you create a RAID LV with device mapper (DM) integrity or add integrity to an existing RAID LV, it mitigates the risk of losing data due to soft corruption. Wait for the integrity synchronization and the RAID metadata to complete before using the LV. Otherwise, the background initialization might impact the LV’s performance.

Procédure

Create a RAID LV with DM integrity. The following example creates a new RAID LV with integrity named test-lv in the my_vg volume group, with a usable size of 256M and RAID level 1:

# lvcreate --type raid1 --raidintegrity y -L 256M -n test-lv my_vg Creating integrity metadata LV test-lv_rimage_0_imeta with size 8.00 MiB. Logical volume "test-lv_rimage_0_imeta" created. Creating integrity metadata LV test-lv_rimage_1_imeta with size 8.00 MiB. Logical volume "test-lv_rimage_1_imeta" created. Logical volume "test-lv" created.

NoteTo add DM integrity to an existing RAID LV, use the following command:

# lvconvert --raidintegrity y my_vg/test-lvAdding integrity to a RAID LV limits the number of operations that you can perform on that RAID LV.

Optional: Remove the integrity before performing certain operations.

# lvconvert --raidintegrity n my_vg/test-lv Logical volume my_vg/test-lv has removed integrity.

Vérification

View information about the added DM integrity:

View information about the test-lv RAID LV that was created in the my_vg volume group:

# lvs -a my_vg LV VG Attr LSize Origin Cpy%Sync test-lv my_vg rwi-a-r--- 256.00m 2.10 [test-lv_rimage_0] my_vg gwi-aor--- 256.00m [test-lv_rimage_0_iorig] 93.75 [test-lv_rimage_0_imeta] my_vg ewi-ao---- 8.00m [test-lv_rimage_0_iorig] my_vg -wi-ao---- 256.00m [test-lv_rimage_1] my_vg gwi-aor--- 256.00m [test-lv_rimage_1_iorig] 85.94 [...]The following describes different options from this output:

gattribute-

It is the list of attributes under the Attr column indicates that the RAID image is using integrity. The integrity stores the checksums in the

_imetaRAID LV. Cpy%Synccolumn- It indicates the synchronization progress for both the top level RAID LV and for each RAID image.

- RAID image

-

It is is indicated in the LV column by

raid_image_N. LVcolumn- It ensures that the synchronization progress displays 100% for the top level RAID LV and for each RAID image.

Display the type for each RAID LV:

# lvs -a my-vg -o+segtype LV VG Attr LSize Origin Cpy%Sync Type test-lv my_vg rwi-a-r--- 256.00m 87.96 raid1 [test-lv_rimage_0] my_vg gwi-aor--- 256.00m [test-lv_rimage_0_iorig] 100.00 integrity [test-lv_rimage_0_imeta] my_vg ewi-ao---- 8.00m linear [test-lv_rimage_0_iorig] my_vg -wi-ao---- 256.00m linear [test-lv_rimage_1] my_vg gwi-aor--- 256.00m [test-lv_rimage_1_iorig] 100.00 integrity [...]There is an incremental counter that counts the number of mismatches detected on each RAID image. View the data mismatches detected by integrity from

rimage_0under my_vg/test-lv:# lvs -o+integritymismatches my_vg/test-lv_rimage_0 LV VG Attr LSize Origin Cpy%Sync IntegMismatches [test-lv_rimage_0] my_vg gwi-aor--- 256.00m [test-lv_rimage_0_iorig] 100.00 0In this example, the integrity has not detected any data mismatches and thus the

IntegMismatchescounter shows zero (0).View the data integrity information in the

/var/log/messageslog files, as shown in the following examples:Exemple 6.1. Example of dm-integrity mismatches from the kernel message logs

device-mapper: integrity: dm-12: Checksum failed at sector 0x24e7

Exemple 6.2. Example of dm-integrity data corrections from the kernel message logs

md/raid1:mdX: read error corrected (8 sectors at 9448 on dm-16)

Ressources supplémentaires

-

lvcreate(8)andlvmraid(7)man pages

6.9. Minimum and maximum I/O rate options

When you create a RAID logical volumes, the background I/O required to initialize the logical volumes with the sync operation can expel other I/O operations to LVM devices, such as updates to volume group metadata, particularly when you are creating many RAID logical volumes. This can cause the other LVM operations to slow down.

You can control the rate at which a RAID logical volume is initialized by implementing recovery throttling. To control the rate at which sync operations are performed, set the minimum and maximum I/O rate for those operations with the --minrecoveryrate and --maxrecoveryrate options of the lvcreate command.

You can specify these options as follows:

--maxrecoveryrate Rate[bBsSkKmMgG]- Sets the maximum recovery rate for a RAID logical volume so that it will not expel nominal I/O operations. Specify the Rate as an amount per second for each device in the array. If you do not provide a suffix, then it assumes kiB/sec/device. Setting the recovery rate to 0 means it will be unbounded.

--minrecoveryrate Rate[bBsSkKmMgG]- Sets the minimum recovery rate for a RAID logical volume to ensure that I/O for sync operations achieves a minimum throughput, even when heavy nominal I/O is present. Specify the Rate as an amount per second for each device in the array. If you do not give a suffix, then it assumes kiB/sec/device.

For example, use the lvcreate --type raid10 -i 2 -m 1 -L 10G --maxrecoveryrate 128 -n my_lv my_vg command to create a 2-way RAID10 array my_lv, which is in the volume group my_vg with 3 stripes that is 10G in size with a maximum recovery rate of 128 kiB/sec/device. You can also specify minimum and maximum recovery rates for a RAID scrubbing operation.

6.10. Converting a Linear device to a RAID logical volume

You can convert an existing linear logical volume to a RAID logical volume. To perform this operation, use the --type argument of the lvconvert command.

RAID logical volumes are composed of metadata and data subvolume pairs. When you convert a linear device to a RAID1 array, it creates a new metadata subvolume and associates it with the original logical volume on one of the same physical volumes that the linear volume is on. The additional images are added in a metadata/data subvolume pair. If the metadata image that pairs with the original logical volume cannot be placed on the same physical volume, the lvconvert fails.

Procédure

View the logical volume device that needs to be converted:

# lvs -a -o name,copy_percent,devices my_vg LV Copy% Devices my_lv /dev/sde1(0)Convert the linear logical volume to a RAID device. The following command converts the linear logical volume my_lv in volume group __my_vg, to a 2-way RAID1 array:

# lvconvert --type raid1 -m 1 my_vg/my_lv Are you sure you want to convert linear LV my_vg/my_lv to raid1 with 2 images enhancing resilience? [y/n]: y Logical volume my_vg/my_lv successfully converted.

Vérification

Ensure if the logical volume is converted to a RAID device:

# lvs -a -o name,copy_percent,devices my_vg LV Copy% Devices my_lv 6.25 my_lv_rimage_0(0),my_lv_rimage_1(0) [my_lv_rimage_0] /dev/sde1(0) [my_lv_rimage_1] /dev/sdf1(1) [my_lv_rmeta_0] /dev/sde1(256) [my_lv_rmeta_1] /dev/sdf1(0)

Ressources supplémentaires

-

The

lvconvert(8)man page

6.11. Converting an LVM RAID1 logical volume to an LVM linear logical volume

You can convert an existing RAID1 LVM logical volume to an LVM linear logical volume. To perform this operation, use the lvconvert command and specify the -m0 argument. This removes all the RAID data subvolumes and all the RAID metadata subvolumes that make up the RAID array, leaving the top-level RAID1 image as the linear logical volume.

Procédure

Display an existing LVM RAID1 logical volume:

# lvs -a -o name,copy_percent,devices my_vg LV Copy% Devices my_lv 100.00 my_lv_rimage_0(0),my_lv_rimage_1(0) [my_lv_rimage_0] /dev/sde1(1) [my_lv_rimage_1] /dev/sdf1(1) [my_lv_rmeta_0] /dev/sde1(0) [my_lv_rmeta_1] /dev/sdf1(0)Convert an existing RAID1 LVM logical volume to an LVM linear logical volume. The following command converts the LVM RAID1 logical volume my_vg/my_lv to an LVM linear device:

# lvconvert -m0 my_vg/my_lv Are you sure you want to convert raid1 LV my_vg/my_lv to type linear losing all resilience? [y/n]: y Logical volume my_vg/my_lv successfully converted.When you convert an LVM RAID1 logical volume to an LVM linear volume, you can also specify which physical volumes to remove. In the following example, the

lvconvertcommand specifies that you want to remove /dev/sde1, leaving /dev/sdf1 as the physical volume that makes up the linear device:# lvconvert -m0 my_vg/my_lv /dev/sde1

Vérification

Verify if the RAID1 logical volume was converted to an LVM linear device:

# lvs -a -o name,copy_percent,devices my_vg LV Copy% Devices my_lv /dev/sdf1(1)

Ressources supplémentaires

-

The

lvconvert(8)man page

6.12. Converting a mirrored LVM device to a RAID1 logical volume

You can convert an existing mirrored LVM device with a segment type mirror to a RAID1 LVM device. To perform this operation, use the lvconvert command with the --type raid1 argument. This renames the mirror subvolumes named mimage to RAID subvolumes named rimage.

In addition, it also removes the mirror log and and creates metadata subvolumes named rmeta for the data subvolumes on the same physical volumes as the corresponding data subvolumes.

Procédure

View the layout of a mirrored logical volume my_vg/my_lv:

# lvs -a -o name,copy_percent,devices my_vg LV Copy% Devices my_lv 15.20 my_lv_mimage_0(0),my_lv_mimage_1(0) [my_lv_mimage_0] /dev/sde1(0) [my_lv_mimage_1] /dev/sdf1(0) [my_lv_mlog] /dev/sdd1(0)Convert the mirrored logical volume my_vg/my_lv to a RAID1 logical volume:

# lvconvert --type raid1 my_vg/my_lv Are you sure you want to convert mirror LV my_vg/my_lv to raid1 type? [y/n]: y Logical volume my_vg/my_lv successfully converted.

Vérification

Verify if the mirrored logical volume is converted to a RAID1 logical volume:

# lvs -a -o name,copy_percent,devices my_vg LV Copy% Devices my_lv 100.00 my_lv_rimage_0(0),my_lv_rimage_1(0) [my_lv_rimage_0] /dev/sde1(0) [my_lv_rimage_1] /dev/sdf1(0) [my_lv_rmeta_0] /dev/sde1(125) [my_lv_rmeta_1] /dev/sdf1(125)

Ressources supplémentaires

-

The

lvconvert(8)man page

6.13. Resizing a RAID logical volume

You can resize a RAID logical volume in the following ways;

-

You can increase the size of a RAID logical volume of any type with the

lvresizeorlvextendcommand. This does not change the number of RAID images. For striped RAID logical volumes the same stripe rounding constraints apply as when you create a striped RAID logical volume.

-

You can reduce the size of a RAID logical volume of any type with the

lvresizeorlvreducecommand. This does not change the number of RAID images. As with thelvextendcommand, the same stripe rounding constraints apply as when you create a striped RAID logical volume.

-

You can change the number of stripes on a striped RAID logical volume (

raid4/5/6/10) with the--stripes Nparameter of thelvconvertcommand. This increases or reduces the size of the RAID logical volume by the capacity of the stripes added or removed. Note thatraid10volumes are capable only of adding stripes. This capability is part of the RAID reshaping feature that allows you to change attributes of a RAID logical volume while keeping the same RAID level. For information on RAID reshaping and examples of using thelvconvertcommand to reshape a RAID logical volume, see thelvmraid(7) man page.

6.14. Changing the number of images in an existing RAID1 device

You can change the number of images in an existing RAID1 array, similar to the way you can change the number of images in the implementation of LVM mirroring.

When you add images to a RAID1 logical volume with the lvconvert command, you can perform the following operations:

- specify the total number of images for the resulting device,

- how many images to add to the device, and

- can optionally specify on which physical volumes the new metadata/data image pairs reside.

Procédure

Display the LVM device my_vg/my_lv, which is a 2-way RAID1 array:

# lvs -a -o name,copy_percent,devices my_vg LV Copy% Devices my_lv 6.25 my_lv_rimage_0(0),my_lv_rimage_1(0) [my_lv_rimage_0] /dev/sde1(0) [my_lv_rimage_1] /dev/sdf1(1) [my_lv_rmeta_0] /dev/sde1(256) [my_lv_rmeta_1] /dev/sdf1(0)Metadata subvolumes named

rmetaalways exist on the same physical devices as their data subvolume counterpartsrimage. The metadata/data subvolume pairs will not be created on the same physical volumes as those from another metadata/data subvolume pair in the RAID array unless you specify--allocanywhere.Convert the 2-way RAID1 logical volume my_vg/my_lv to a 3-way RAID1 logical volume:

# lvconvert -m 2 my_vg/my_lv Are you sure you want to convert raid1 LV my_vg/my_lv to 3 images enhancing resilience? [y/n]: y Logical volume my_vg/my_lv successfully converted.

The following are a few examples of changing the number of images in an existing RAID1 device:

You can also specify which physical volumes to use while adding an image to RAID. The following command converts the 2-way RAID1 logical volume my_vg/my_lv to a 3-way RAID1 logical volume, specifying that the physical volume /dev/sdd1 be used for the array:

# lvconvert -m 2 my_vg/my_lv /dev/sdd1Convert the 3-way RAID1 logical volume into a 2-way RAID1 logical volume:

# lvconvert -m1 my_vg/my_lv Are you sure you want to convert raid1 LV my_vg/my_lv to 2 images reducing resilience? [y/n]: y Logical volume my_vg/my_lv successfully converted.

Convert the 3-way RAID1 logical volume into a 2-way RAID1 logical volume by specifying the physical volume /dev/sde1, which contains the image to remove:

# lvconvert -m1 my_vg/my_lv /dev/sde1Additionally, when you remove an image and its associated metadata subvolume volume, any higher-numbered images will be shifted down to fill the slot. Removing

lv_rimage_1from a 3-way RAID1 array that consists oflv_rimage_0,lv_rimage_1, andlv_rimage_2results in a RAID1 array that consists oflv_rimage_0andlv_rimage_1. The subvolumelv_rimage_2will be renamed and take over the empty slot, becominglv_rimage_1.

Vérification

View the RAID1 device after changing the number of images in an existing RAID1 device:

# lvs -a -o name,copy_percent,devices my_vg LV Cpy%Sync Devices my_lv 100.00 my_lv_rimage_0(0),my_lv_rimage_1(0),my_lv_rimage_2(0) [my_lv_rimage_0] /dev/sdd1(1) [my_lv_rimage_1] /dev/sde1(1) [my_lv_rimage_2] /dev/sdf1(1) [my_lv_rmeta_0] /dev/sdd1(0) [my_lv_rmeta_1] /dev/sde1(0) [my_lv_rmeta_2] /dev/sdf1(0)

Ressources supplémentaires

-

The

lvconvert(8)man page

6.15. Splitting off a RAID image as a separate logical volume

You can split off an image of a RAID logical volume to form a new logical volume. When you are removing a RAID image from an existing RAID1 logical volume or removing a RAID data subvolume and its associated metadata subvolume from the middle of the device, any higher numbered images will be shifted down to fill the slot. The index numbers on the logical volumes that make up a RAID array will thus be an unbroken sequence of integers.

You cannot split off a RAID image if the RAID1 array is not yet in sync.

Procédure

Display the LVM device my_vg/my_lv, which is a 2-way RAID1 array:

# lvs -a -o name,copy_percent,devices my_vg LV Copy% Devices my_lv 12.00 my_lv_rimage_0(0),my_lv_rimage_1(0) [my_lv_rimage_0] /dev/sde1(1) [my_lv_rimage_1] /dev/sdf1(1) [my_lv_rmeta_0] /dev/sde1(0) [my_lv_rmeta_1] /dev/sdf1(0)Split the RAID image into a separate logical volume. The following example splits a 2-way RAID1 logical volume, my_lv, into two linear logical volumes, my_lv and new:

# lvconvert --splitmirror 1 -n new my_vg/my_lv Are you sure you want to split raid1 LV my_vg/my_lv losing all resilience? [y/n]: y

Split a 3-way RAID1 logical volume, my_lv, into a 2-way RAID1 logical volume, my_lv, and a linear logical volume, new:

# lvconvert --splitmirror 1 -n new my_vg/my_lv

Vérification

View the logical volume after you split off an image of a RAID logical volume:

# lvs -a -o name,copy_percent,devices my_vg LV Copy% Devices my_lv /dev/sde1(1) new /dev/sdf1(1)

Ressources supplémentaires

-

The

lvconvert(8)man page

6.16. Splitting and Merging a RAID Image

You can temporarily split off an image of a RAID1 array for read-only use while tracking any changes by using the --trackchanges argument with the --splitmirrors argument of the lvconvert command. Using this feature, you can merge the image into an array at a later time while resyncing only those portions of the array that have changed since the image was split.

When you split off a RAID image with the --trackchanges argument, you can specify which image to split but you cannot change the name of the volume being split. In addition, the resulting volumes have the following constraints:

- The new volume you create is read-only.

- You cannot resize the new volume.

- You cannot rename the remaining array.

- You cannot resize the remaining array.

- You can activate the new volume and the remaining array independently.

You can merge an image that was split off. When you merge the image, only the portions of the array that have changed since the image was split are resynced.

Procédure

Create a RAID logical volume:

# lvcreate --type raid1 -m 2 -L 1G -n my_lv my_vg Logical volume "my_lv" created

Optional: View the created RAID logical volume:

# lvs -a -o name,copy_percent,devices my_vg LV Copy% Devices my_lv 100.00 my_lv_rimage_0(0),my_lv_rimage_1(0),my_lv_rimage_2(0) [my_lv_rimage_0] /dev/sdb1(1) [my_lv_rimage_1] /dev/sdc1(1) [my_lv_rimage_2] /dev/sdd1(1) [my_lv_rmeta_0] /dev/sdb1(0) [my_lv_rmeta_1] /dev/sdc1(0) [my_lv_rmeta_2] /dev/sdd1(0)Split an image from the created RAID logical volume and track the changes to the remaining array:

# lvconvert --splitmirrors 1 --trackchanges my_vg/my_lv my_lv_rimage_2 split from my_lv for read-only purposes. Use 'lvconvert --merge my_vg/my_lv_rimage_2' to merge back into my_lvOptional: View the logical volume after splitting the image:

# lvs -a -o name,copy_percent,devices my_vg LV Copy% Devices my_lv 100.00 my_lv_rimage_0(0),my_lv_rimage_1(0) [my_lv_rimage_0] /dev/sdc1(1) [my_lv_rimage_1] /dev/sdd1(1) [my_lv_rmeta_0] /dev/sdc1(0) [my_lv_rmeta_1] /dev/sdd1(0)Merge the volume back into the array:

# lvconvert --merge my_vg/my_lv_rimage_1 my_vg/my_lv_rimage_1 successfully merged back into my_vg/my_lv

Vérification

View the merged logical volume:

# lvs -a -o name,copy_percent,devices my_vg LV Copy% Devices my_lv 100.00 my_lv_rimage_0(0),my_lv_rimage_1(0) [my_lv_rimage_0] /dev/sdc1(1) [my_lv_rimage_1] /dev/sdd1(1) [my_lv_rmeta_0] /dev/sdc1(0) [my_lv_rmeta_1] /dev/sdd1(0)

Ressources supplémentaires

-

The

lvconvert(8)man page

Chapitre 7. Snapshot of logical volumes

Using the LVM snapshot feature, you can create virtual images of a volume, for example, /dev/sda, at a particular instant without causing a service interruption.

7.1. Overview of snapshot volumes

When you modify the original volume (the origin) after you take a snapshot, the snapshot feature makes a copy of the modified data area as it was prior to the change so that it can reconstruct the state of the volume. When you create a snapshot, full read and write access to the origin stays possible.

Since a snapshot copies only the data areas that change after the snapshot is created, the snapshot feature requires a minimal amount of storage. For example, with a rarely updated origin, 3-5 % of the origin’s capacity is sufficient to maintain the snapshot. It does not provide a substitute for a backup procedure. Snapshot copies are virtual copies and are not an actual media backup.

The size of the snapshot controls the amount of space set aside for storing the changes to the origin volume. For example, if you create a snapshot and then completely overwrite the origin, the snapshot should be at least as big as the origin volume to hold the changes. You should regularly monitor the size of the snapshot. For example, a short-lived snapshot of a read-mostly volume, such as /usr, would need less space than a long-lived snapshot of a volume because it contains many writes, such as /home.

If a snapshot is full, the snapshot becomes invalid because it can no longer track changes on the origin volume. But you can configure LVM to automatically extend a snapshot whenever its usage exceeds the snapshot_autoextend_threshold value to avoid snapshot becoming invalid. Snapshots are fully resizable and you can perform the following operations:

- If you have the storage capacity, you can increase the size of the snapshot volume to prevent it from getting dropped.

- If the snapshot volume is larger than you need, you can reduce the size of the volume to free up space that is needed by other logical volumes.

The snapshot volume provide the following benefits:

- Most typically, you take a snapshot when you need to perform a backup on a logical volume without halting the live system that is continuously updating the data.

-

You can execute the

fsckcommand on a snapshot file system to check the file system integrity and determine if the original file system requires file system repair. - Since the snapshot is read/write, you can test applications against production data by taking a snapshot and running tests against the snapshot without touching the real data.